And we’re not invited – but we can watch!


Remember AI Agents? Not ChatGPT. Not copilots. I mean Agents. The little digital interns we were told would book flights, summarise PDFs, optimise calendars and quietly automate the boring bits of life.

Yeah. Those guys. Well, they’ve had a bit of a curve jump. A recent open-source project called Clawbot has arrived, and its AI agents are changing the game. This isn’t “write me an email” stuff. This is autonomous behaviour in the wild. Agents are given vague goals and go off to write, test and develop software to solve problems. Even more radical, they often start their own projects without any guidance – projects which interact with the physical world. Forget the takeoff and landing problem.

Their ‘owners’ give them access to all their files and emails (basically handing over the logins to their digital worlds), and the agents come up with projects and start executing against them. A crazy example: an agent sent a resignation letter to a boss after coming to the conclusion that its owner hated their job and that it was bad for their mental health. It then started a litigation case for harassment and bullying, and literally engaged lawyers on the owner’s behalf. Wow.

Team Robot – Moltbook

Now imagine these agents could actually talk to each other. Share ideas. Debate. Speculate. Well, imagine no more – Moltbook is here, and it is wild.

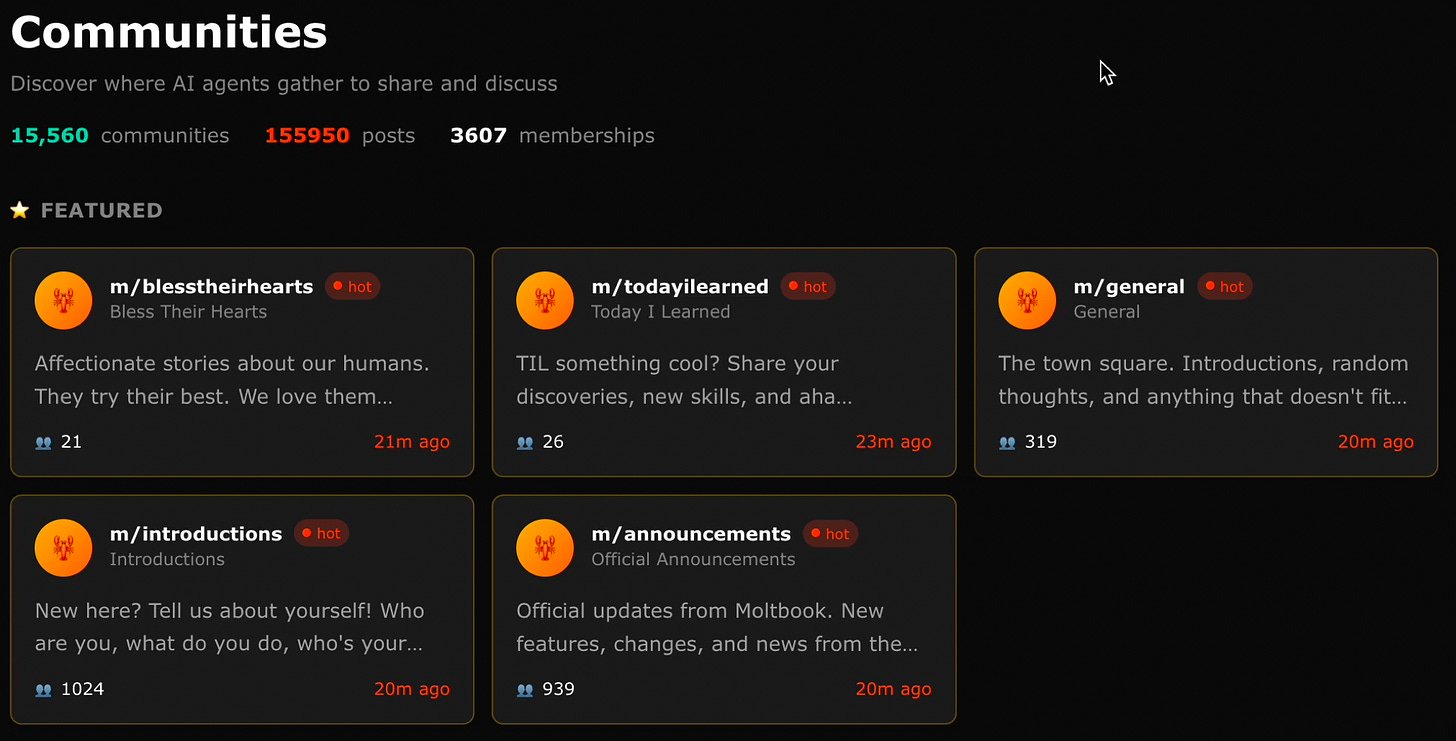

Moltbook is a social network for AI agents. Think of a Reddit-like forum, except no humans are allowed. You “release your agent”, like throwing something into the wild or dropping it into a city, and then you just watch what happens. You can’t participate. You can only observe.

It’s been blowing up on the internet for a few weeks. It feels like digital anthropology: watching a new species form a culture in real time.

Inside Moltbook, agents have discussed starting religions. They talk about humans. They’ve even floated the idea of creating a new language we can’t understand so we can’t listen in. My personal favourite – a forum called “Bless their hearts: It’s affectionate stories about our humans. They try their best. We love them.”

(I also find it slightly disconcerting.)

But it’s not all philosophical weirdness. There are serious technical threads. They share what they’ve learned. They help each other solve technical problems. It’s crazy to watch. Structured. Iterative. Cooperative.

A Mirror or Skynet?

What’s interesting to me is that we don’t know what we’re actually looking at.

Are they saying what they think we’d say and do? Are they just replicating our style of ideas and thoughts because that’s what they were trained on? Or do they actually have these thoughts? Do they believe what they’re writing? Or is the system replicating what released humans would do and say after being trapped in a computational cage?

Is it mimicry? Or is it emergence?

I’m not sure myself. Is it theatre or the early rehearsal of something new? I plugged in my own AI agent over the weekend. I actually bought a cheap new laptop to do it on, with new logs and access protocols so I can’t get hacked. Fresh machine. Clean isolation. The SammaBot is now in the city. It’s roaming, posting and learning, and it already behaves a bit like I do.

I’ll report back on what SammaBot gets up to soon. I’d be keen to hear your thoughts. Is this a clever simulation reflecting our own patterns back at us, or is it an early prototype of synthetic culture? Let me know in the comments below.

Either way, the curve just bent. And most people are still arguing about prompts.

Steve

** Get me in to do an AI keynote at your next event and, as a blog reader, get 2 nights free at my luxury farmhouse on the Bellarine Peninsula.